

Residual statistics

1/27/2024

The homoscedasticity assumption CANNOT be replaced by the assumption of a large sample size, although there are methods for dealing with heteroscedasticity.
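The post does not say which methods it has in mind. One widely used option is heteroscedasticity-robust (White, HC0) standard errors, sketched below in base R. The data are simulated and every variable name (`x`, `y`, `fit`, `vcov_hc0`, and so on) is hypothetical, so treat this as an illustration under those assumptions rather than the author's own procedure.

```r
# Simulated data (hypothetical): the error spread grows with x, so the
# homoscedasticity assumption is violated by construction.
set.seed(1)
n <- 200
x <- runif(n, 1, 10)
y <- 2 + 0.5 * x + rnorm(n, sd = 0.3 * x)

fit <- lm(y ~ x)

# Residuals are the observable counterpart of the unobserved errors:
# each observed y minus its fitted value on the regression line.
e <- resid(fit)

# White (HC0) sandwich estimator of the coefficient covariance matrix:
# (X'X)^-1  X' diag(e^2) X  (X'X)^-1
X <- model.matrix(fit)
bread <- solve(crossprod(X))
meat <- crossprod(X * e)  # same as t(X) %*% diag(e^2) %*% X
vcov_hc0 <- bread %*% meat %*% bread

# Compare the classical standard errors with the robust ones.
se_classical <- sqrt(diag(vcov(fit)))
se_robust <- sqrt(diag(vcov_hc0))
print(cbind(se_classical, se_robust))
```

The coefficient estimates are unchanged; only the standard errors used for the t statistics differ. Packages such as `sandwich` provide the same estimator with small-sample corrections.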

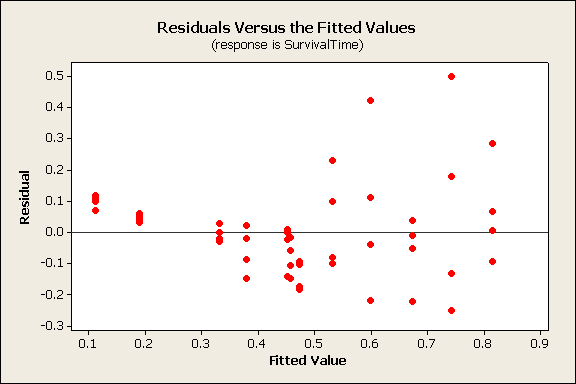

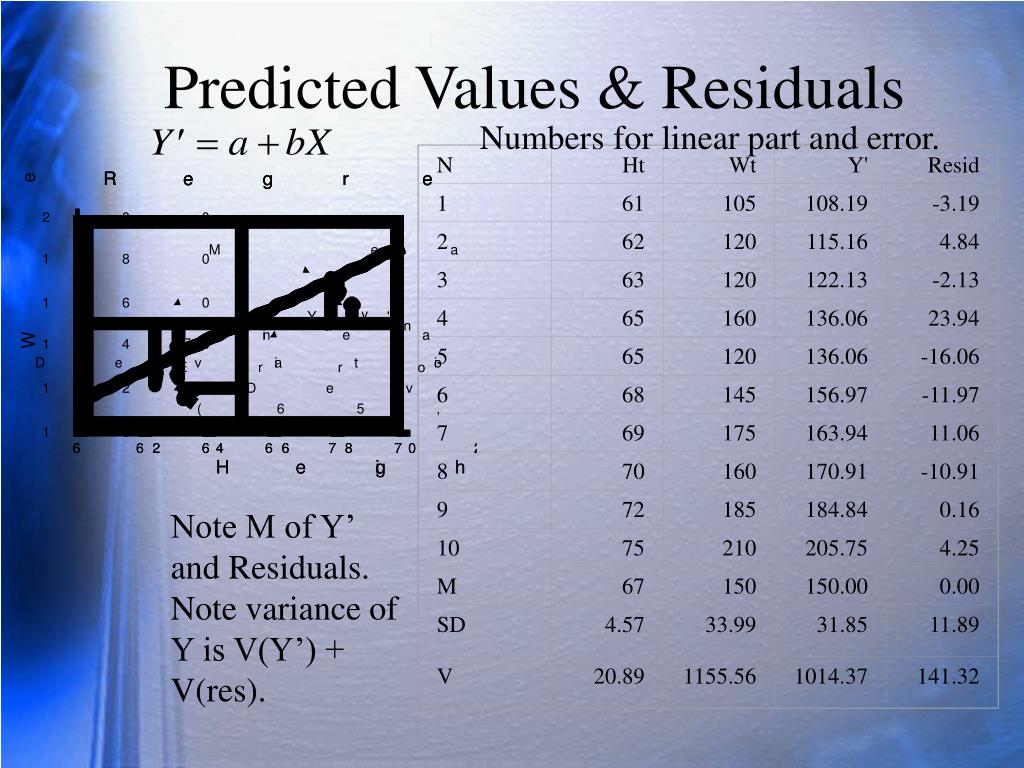

The disturbance, or error term, represents factors other than x that affect y. Understanding the error term is useful in OLS because it is the subject of many of our important assumptions. Since we do not observe errors, we resort to looking at residuals, which can give us an idea about the underlying errors. While errors are unobservable, residuals are observable: we can calculate them as the difference between each of our y values and its corresponding fitted value on the regression line.

Two important Classical Linear Model Assumptions are (1) Normality of Error Terms and (2) Homoscedasticity.

Assumption (1): We assume that the unobserved factors are normally distributed around the population regression function. This assumption is for the purpose of statistical inference, but it is not crucial: it can be replaced by the assumption of a large sample size.

Assumption (2): We assume that the error $\epsilon$ has the same variance given any value of the explanatory variables. If the model exhibits heteroscedasticity, then our t statistics do not have t distributions and our F statistics do not have F distributions, and thus our statistical inference is no longer reliable.

For item fit, the asymptotic standard error of the fit mean square (MS) depends on \(N\), the sample size of students. As an example, when the sample size is 2000, the asymptotic standard error is 0.032, so the range for accepting items as fitting the model is 0.94 to 1.06. In contrast, when the sample size is 500, the asymptotic standard error is 0.063, so the range is 0.87 to 1.13. Both sets of figures are consistent with a standard error of \(\sqrt{2/N}\) and a range of 1 plus or minus two standard errors.

The item fit statistics can be tabulated with `library(knitr)` followed by `kable(fit2$fit.item, digits = 3, align = "ccccc", caption = "Item fit statistics for CTTdata", row.names = FALSE)`.

Table 10.1: Item fit statistics for CTTdata

What the fit MS detects is whether the observed ICC is steeper or flatter than the theoretical ICC. We will look at infit values instead of outfit values for the following discussions. Item 16 in Table 10.1 has an infit MS greater than 1. Figure 10.5 shows the ICC.
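The acceptance ranges quoted for sample sizes of 2000 and 500 can be reproduced with a few lines of R. The formula used here, a standard error of `sqrt(2 / N)` and a range of 1 plus or minus two standard errors, is an assumption inferred from the quoted numbers, and `fit_ms_range` is a hypothetical helper name, not a function from any package.

```r
# Hypothetical helper: approximate acceptance range for a fit mean square,
# assuming an asymptotic standard error of sqrt(2 / N) and a range of
# 1 +/- 2 standard errors (inferred from the figures quoted in the text).
fit_ms_range <- function(n) {
  se <- sqrt(2 / n)
  c(se = se, lower = 1 - 2 * se, upper = 1 + 2 * se)
}

# Standard error and range for the two sample sizes discussed.
print(round(fit_ms_range(2000), 3))
print(round(fit_ms_range(500), 3))
```

Rounded to two decimals, the bounds come out as 0.94 and 1.06 for 2000 students and 0.87 and 1.13 for 500, matching the text. The range widens as the sample shrinks, so a fixed cut-off for fit MS would be too strict for small samples and too lenient for large ones.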
